Up to the mark


From Shaastra, Vol. 05, Issue 04, April 2026

AI helps bridge learning gaps. But does it pass muster as the examiner of test papers?

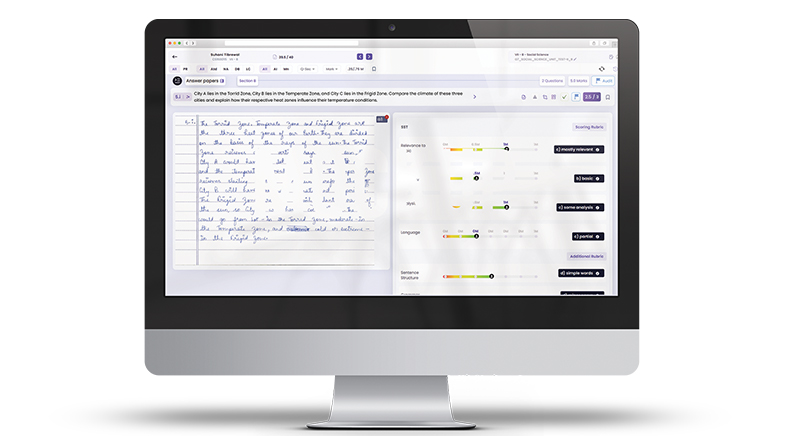

The poet got it wrong: March, not April, is the cruellest month, at least in some circles. It is examination time, which means acute stress for most students. For teachers, too, the season is taxing, as they face the arduous job of checking mounds of answer papers. There is, however, peace in some little pockets. Lynette Basnet and her colleagues at CS Academy, a school in Kovaipudur, Coimbatore, for instance, have had a relatively relaxed March: artificial intelligence (AI) has shouldered a major part of their evaluation and assessment work. The school scanned and uploaded students' answer papers, and DeepGrade, an AI tool developed by Chennai-based start-up Smartail, checked and evaluated the descriptive answers and gave educators an assessment for each student. "Personal accountability can never be taken away, no matter what tool we use, but it does ease the burden of physical or manual correction," says Basnet. A social science teacher with over 28 years of teaching experience, she has been working with Smartail's team to improve the tool's answer evaluations.

AI is now expanding its footprint from personalised learning to personalised assessment. It can, for one, swiftly evaluate large volumes of answers. The evaluation is also meant to be unbiased: DeepGrade scores each answer against a rubric, or scoring guide. The resulting assessments and performance insights help students pinpoint gaps in their understanding, and help teachers devise strategies to fill those gaps. Some tools also help students prepare for entrance examinations.

Start-ups such as Vadodara-based EdOptimize, Pune-based EduSageAI and Mumbai-based Exam Grader have also developed AI tools that evaluate descriptive answers. Smartail currently provides services to 18 schools and plans to tweak its AI system to suit law universities, engineering colleges and medical colleges. It also aims to train the system to understand multiple languages.

Smartail's Chief Executive Officer, Swaminathan Ganesan, conceived the idea of developing such a tool when his friend, Jay Murugan Subbiah, Principal of Jay International School in Salem, mentioned the immense workload of teachers during a casual conversation in 2019. Evaluating students and their answer papers was especially onerous. Ganesan, with a degree in Computer Software Engineering, wondered if AI could help teachers cope with evaluating answer sheets.

The first step was to develop a tool that could read students' widely varying handwriting. For this, he partnered with about 10 schools, which provided his team with students' answer sheets to train the AI model. Poor handwriting was not a problem: the start-up claims that if a teacher could read a student's scrawl, so could its tool. Next, the team tried to train the AI to assess an answer by feeding it the same answer as graded by 100 teachers. "That was the place where we burnt our fingers," he says. "We had no idea of why our model was giving a particular mark for this particular answer." To make the assessment more objective and explainable, they adopted a rubric-based system, in which every answer is broken into components such as content accuracy, reasoning, structure and clarity, and marks are assigned according to the rubric.
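To see why a rubric makes a mark easier to explain, here is a minimal sketch in Python. It is not Smartail's DeepGrade method: the four components come from the article, but the 10-mark split, the 0-to-1 rating scale and the names `Criterion` and `score_answer` are hypothetical, chosen only for illustration. The point is that the final mark arrives with a per-component breakdown, so a teacher can see where marks were gained or lost.

```python
from dataclasses import dataclass


@dataclass
class Criterion:
    name: str
    max_marks: float


# Hypothetical rubric for a 10-mark answer. The component names follow the
# article; the mark split is an assumption, not Smartail's actual rubric.
RUBRIC = [
    Criterion("content accuracy", 4),
    Criterion("reasoning", 3),
    Criterion("structure", 2),
    Criterion("clarity", 1),
]


def score_answer(ratings: dict[str, float]) -> tuple[float, dict[str, float]]:
    """Turn per-component ratings (0 to 1) into marks, keeping the breakdown.

    A missing component is treated as a rating of 0.
    """
    breakdown: dict[str, float] = {}
    for criterion in RUBRIC:
        rating = ratings.get(criterion.name, 0.0)
        if not 0.0 <= rating <= 1.0:
            raise ValueError(f"rating for {criterion.name!r} must be between 0 and 1")
        # Each component contributes its share of that component's maximum marks.
        breakdown[criterion.name] = round(rating * criterion.max_marks, 2)
    return sum(breakdown.values()), breakdown


if __name__ == "__main__":
    total, parts = score_answer(
        {"content accuracy": 0.75, "reasoning": 0.5, "structure": 1.0, "clarity": 0.5}
    )
    print(total)  # 7.0
    print(parts)
```

The breakdown reads 3.0 for content accuracy, 1.5 for reasoning, 2.0 for structure and 0.5 for clarity. That is the kind of traceability the team says was missing when the model learned only from teachers' overall marks.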

The tool uses Bloom's Taxonomy, a system that classifies learning from basic knowledge recall to higher-order thinking, to analyse exam questions and answers, so that teachers can gauge not just the marks but the level of thinking students demonstrate. As AI-based evaluation is only beginning to make headway, educators such as Basnet are treading with caution, suggesting changes to the tool where needed to ensure reliability.

While objective-type questions and OMR (Optical Mark Recognition) sheets have largely solved the problem of evaluating entrance examinations, unbiased assessment remains a major concern, especially in India's school-leaving board examinations, where the sheer number of candidates makes consistent, unbiased checking of answer sheets difficult. AI evaluation tools may now streamline this process. If AI takes over the tedious task of manually checking descriptive answer sheets, teachers will be free to do what they love most: teach.
